Canary rollout of a KServe ML model

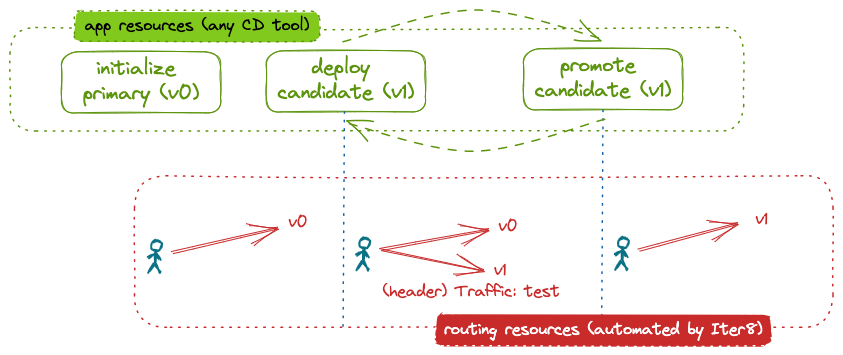

This tutorial shows how Iter8 can be used to implement a canary rollout of ML models hosted in a KServe environment. In a canary rollout, inference requests that match a particular pattern, for example those that have a particular header, are directed to the candidate version of the model. The remaining requests go to the primary, or initial, version of the model. Iter8 enables a canary rollout by automatically configuring the routing resources to distribute inference requests.

After a one-time initialization step, the end user merely deploys candidate models, evaluates them, and either promotes or deletes them. Iter8 automatically handles the underlying routing configuration.

Before you begin

- Ensure that you have the kubectl and helm CLIs.
- Have access to a cluster running KServe. You can create a KServe Quickstart environment as follows:

curl -s "https://raw.githubusercontent.com/kserve/kserve/release-0.11/hack/quick_install.sh" | bash

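Install the Iter8 controller

Install the controller using either Helm or Kustomize, in either namespace-scoped or cluster-scoped mode. Run only one of the following commands.

Helm, namespace-scoped:

helm install --repo https://iter8-tools.github.io/iter8 --version 0.1.12 iter8 controller

Helm, cluster-scoped:

helm install --repo https://iter8-tools.github.io/iter8 --version 0.1.12 iter8 controller \
--set clusterScoped=true

Kustomize, namespace-scoped:

kubectl apply -k 'https://github.com/iter8-tools/iter8.git/kustomize/controller/namespaceScoped?ref=v0.17.1'

Kustomize, cluster-scoped:

kubectl apply -k 'https://github.com/iter8-tools/iter8.git/kustomize/controller/clusterScoped?ref=v0.17.1'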

Initialize primary

Application

Deploy the primary version of the application. In this tutorial, the application is a KServe model. Initialize the resources for the primary version of the model (v0) by deploying an InferenceService as follows:

cat <<EOF | kubectl apply -f -
apiVersion: "serving.kserve.io/v1beta1"
kind: "InferenceService"
metadata:
  name: wisdom-0
  labels:
    app.kubernetes.io/name: wisdom
    app.kubernetes.io/version: v0
    iter8.tools/watch: "true"
spec:
  predictor:
    minReplicas: 1
    model:
      modelFormat:
        name: sklearn
      runtime: kserve-mlserver
      storageUri: "gs://seldon-models/sklearn/mms/lr_model"
EOF

About the primary InferenceService

The base name (wisdom) and version (v0) are identified using the labels app.kubernetes.io/name and app.kubernetes.io/version, respectively. These labels are not required.

Naming the instance with the suffix -0 (and the candidate with the suffix -1) simplifies the routing initialization (see below). However, any name can be specified.

The label iter8.tools/watch: "true" is required. It lets Iter8 know that it should pay attention to changes to this application resource.
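To confirm which resources carry the watch label, a label selector query can help. The sketch below wraps kubectl get in a helper named list_watched; the helper name is hypothetical, while the label key and value are taken from the manifest above.

```shell
# list_watched: hypothetical helper that lists the InferenceServices in the
# current namespace that carry the label iter8.tools/watch=true.
list_watched() {
  kubectl get inferenceservice -l iter8.tools/watch=true -o name
}
```

Calling list_watched after deploying the primary should print one resource name per line; an empty result suggests the label is missing or misspelled.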

You can inspect the deployed InferenceService. When the READY field becomes True, the model is fully deployed.

kubectl get inferenceservice wisdom-0
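Instead of polling the READY field by hand, you can block until the resource reports the Ready condition. This is a minimal sketch using kubectl wait; the helper name wait_for_isvc and the default timeout of 300s are assumptions, not values from the Iter8 documentation.

```shell
# wait_for_isvc: hypothetical helper that blocks until the named
# InferenceService reports the Ready condition, or the timeout expires.
#   $1 = InferenceService name (for example, wisdom-0)
#   $2 = timeout, optional (assumed default: 300s)
wait_for_isvc() {
  kubectl wait --for=condition=Ready "inferenceservice/$1" --timeout="${2:-300s}"
}
```

For example, wait_for_isvc wisdom-0 120s returns a non-zero exit status if the model is not ready within two minutes, which makes it convenient in scripts.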

Routing

Initialize the routing resources for the application to use a canary rollout strategy:

cat <<EOF | helm template routing --repo https://iter8-tools.github.io/iter8 routing-actions --version 0.1.5 -f - | kubectl apply -f -
appType: kserve
appName: wisdom
action: initialize
strategy: canary
EOF

The initialize action (with strategy canary) configures the (Istio) service mesh to route all requests to the primary version of the application (wisdom-0). It further defines the routing policy that will be used when changes are observed in the application resources. By default, this routing policy sends requests with the header traffic set to the value test to the candidate version and all remaining requests to the primary version. For detailed configuration options, see the Helm chart.
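The default policy described above can be modeled as a small decision function. This is only an illustration of the rule, not Iter8 code: the actual matching is done by the service mesh, and the function name pick_version and its arguments are made up for this sketch.

```shell
# pick_version: illustrative model of the default canary routing rule.
#   $1 = value of the "traffic" request header (empty if absent)
#   $2 = "yes" if a candidate version is deployed, anything else otherwise
pick_version() {
  if [ "$2" = "yes" ] && [ "$1" = "test" ]; then
    echo "candidate"
  else
    echo "primary"
  fi
}

pick_version "test" "yes"   # prints: candidate
pick_version ""     "yes"   # prints: primary
pick_version "test" "no"    # prints: primary
```

The last call reflects the state right after initialization: with no candidate deployed, every request goes to the primary regardless of headers.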

Verify routing

To verify the routing configuration, you can inspect the VirtualService:

kubectl get virtualservice -o yaml wisdom

To send inference requests to the model, use one of the following two approaches.

From within the cluster:

- Create a sleep pod in the cluster from which requests can be made:

  curl -s https://raw.githubusercontent.com/iter8-tools/docs/v0.15.2/samples/kserve-serving/sleep.sh | sh -

- Exec into the sleep pod:

  kubectl exec --stdin --tty "$(kubectl get pod --sort-by={metadata.creationTimestamp} -l app=sleep -o jsonpath={.items..metadata.name} | rev | cut -d' ' -f 1 | rev)" -c sleep -- /bin/sh

- Make inference requests:

  cat wisdom.sh
  . wisdom.sh

  or, to send a request with header traffic: test:

  cat wisdom-test.sh
  . wisdom-test.sh

From outside the cluster:

- In a separate terminal, port-forward the ingress gateway:

  kubectl -n istio-system port-forward svc/knative-local-gateway 8080:80

- Download the sample input:

  curl -sO https://raw.githubusercontent.com/iter8-tools/docs/v0.15.2/samples/kserve-serving/input.json

- Send inference requests:

  curl -H 'Content-Type: application/json' -H 'Host: wisdom.default' localhost:8080 -d @input.json -s -D - \
  | grep -e HTTP -e app-version

  or, to send a request with header traffic: test:

  curl -H 'Content-Type: application/json' -H 'Host: wisdom.default' localhost:8080 -d @input.json -s -D - \
  -H 'traffic: test' \
  | grep -e HTTP -e app-version

Note that the model version responding to each inference request is noted in the response header app-version. In the requests above, we display only the response code and this header.
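When sending several requests, it can be handy to count how many responses each version served. The following sketch reads raw response headers on stdin and tallies the app-version header; the helper name tally_versions is hypothetical, and the sketch assumes each response carries exactly one app-version header line.

```shell
# tally_versions: reads raw HTTP response headers on stdin and prints a
# count per distinct app-version header value.
tally_versions() {
  tr -d '\r' \
    | grep -i '^app-version:' \
    | sed 's/^[^:]*:[[:space:]]*//' \
    | sort \
    | uniq -c
}

# Example use against the port-forwarded gateway (requires the setup above):
#   for i in 1 2 3 4 5; do
#     curl -s -o /dev/null -D - -H 'Content-Type: application/json' \
#       -H 'Host: wisdom.default' localhost:8080 -d @input.json
#   done | tally_versions
```

Stripping carriage returns first matters because HTTP header lines end in CRLF, which would otherwise make identical values compare as different.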

Deploy candidate

Deploy a candidate model using a second InferenceService:

cat <<EOF | kubectl apply -f -
apiVersion: "serving.kserve.io/v1beta1"
kind: "InferenceService"
metadata:
  name: wisdom-1
  labels:
    app.kubernetes.io/name: wisdom
    app.kubernetes.io/version: v1
    iter8.tools/watch: "true"
spec:
  predictor:
    minReplicas: 1
    model:
      modelFormat:
        name: sklearn
      runtime: kserve-mlserver
      storageUri: "gs://seldon-models/sklearn/mms/lr_model"
EOF

About the candidate InferenceService

In this tutorial, the model source (field spec.predictor.model.storageUri) is the same as for the primary version of the model. In a real-world rollout, the candidate would point to a different model.

Verify routing changes

The deployment of the candidate triggers an automatic routing reconfiguration by Iter8. Inspect the VirtualService to see that the routing has been changed. Requests are now distributed between the primary model and the candidate model:

kubectl get virtualservice wisdom -o yaml

You can send additional inference requests as described above. Requests with the header traffic: test are handled by the candidate version; all other requests are handled by the primary version.
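Rather than reading the full YAML, you can list just the destination hosts that the VirtualService routes to. This sketch assumes the standard Istio VirtualService layout (spec.http[].route[].destination.host); the helper name vs_destinations is hypothetical, and the hosts Iter8 actually configures may differ from what you expect.

```shell
# vs_destinations: hypothetical helper that prints the distinct destination
# hosts referenced by the HTTP routes of a VirtualService, one per line.
#   $1 = VirtualService name (wisdom in this tutorial)
vs_destinations() {
  kubectl get virtualservice "$1" \
    -o jsonpath='{range .spec.http[*].route[*]}{.destination.host}{"\n"}{end}' \
    | sort -u
}
```

Running vs_destinations wisdom before and after deploying the candidate gives a compact view of how the set of route destinations changed.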

Promote candidate

Promoting the candidate involves redefining the primary version of the application and deleting the candidate version.

Redefine primary

cat <<EOF | kubectl replace -f -
apiVersion: "serving.kserve.io/v1beta1"
kind: "InferenceService"
metadata:
  name: wisdom-0
  labels:
    app.kubernetes.io/name: wisdom
    app.kubernetes.io/version: v1
    iter8.tools/watch: "true"
spec:
  predictor:
    minReplicas: 1
    model:
      modelFormat:
        name: sklearn
      runtime: kserve-mlserver
      storageUri: "gs://seldon-models/sklearn/mms/lr_model"
EOF

What is different?

The version label (app.kubernetes.io/version) was updated. In a real-world example, spec.predictor.model.storageUri would also be updated.

Delete candidate

Once the primary InferenceService has been redeployed, delete the candidate:

kubectl delete inferenceservice wisdom-1
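The ordering matters: the candidate should only be removed once the redefined primary is serving. A minimal sketch of that sequencing follows. The helper name promote, the manifest file argument, and the 300s timeout are all assumptions; note also that immediately after a replace, the Ready condition may still reflect the previous revision, so treat the wait as a basic guard rather than a guarantee.

```shell
# promote: hypothetical helper that sequences the two promotion steps and
# stops at the first failure, so the candidate is never deleted early.
#   $1 = path to a manifest that redefines the primary (a file you supply)
#   $2 = name of the primary InferenceService (for example, wisdom-0)
#   $3 = name of the candidate InferenceService (for example, wisdom-1)
promote() {
  kubectl replace -f "$1" \
    && kubectl wait --for=condition=Ready "inferenceservice/$2" --timeout=300s \
    && kubectl delete inferenceservice "$3"
}
```

For example, promote primary-v1.yaml wisdom-0 wisdom-1, where primary-v1.yaml is a placeholder name for a file holding the manifest shown under Redefine primary.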

Verify routing changes

Inspect the VirtualService to see that it has been automatically reconfigured to send requests only to the primary:

kubectl get virtualservice wisdom -o yaml

Cleanup

If not already deleted, delete the candidate model:

kubectl delete isvc/wisdom-1

Delete routing:

cat <<EOF | helm template routing --repo https://iter8-tools.github.io/iter8 routing-actions --version 0.1.5 -f - | kubectl delete -f -
appType: kserve
appName: wisdom
action: initialize
strategy: canary
EOF

Delete primary:

kubectl delete isvc/wisdom-0

Uninstall the Iter8 controller using the command that matches how it was installed.

Helm:

helm delete iter8

Kustomize, namespace-scoped:

kubectl delete -k 'https://github.com/iter8-tools/iter8.git/kustomize/controller/namespaceScoped?ref=v0.17.1'

Kustomize, cluster-scoped:

kubectl delete -k 'https://github.com/iter8-tools/iter8.git/kustomize/controller/clusterScoped?ref=v0.17.1'