SLO validation using custom metrics (single version)

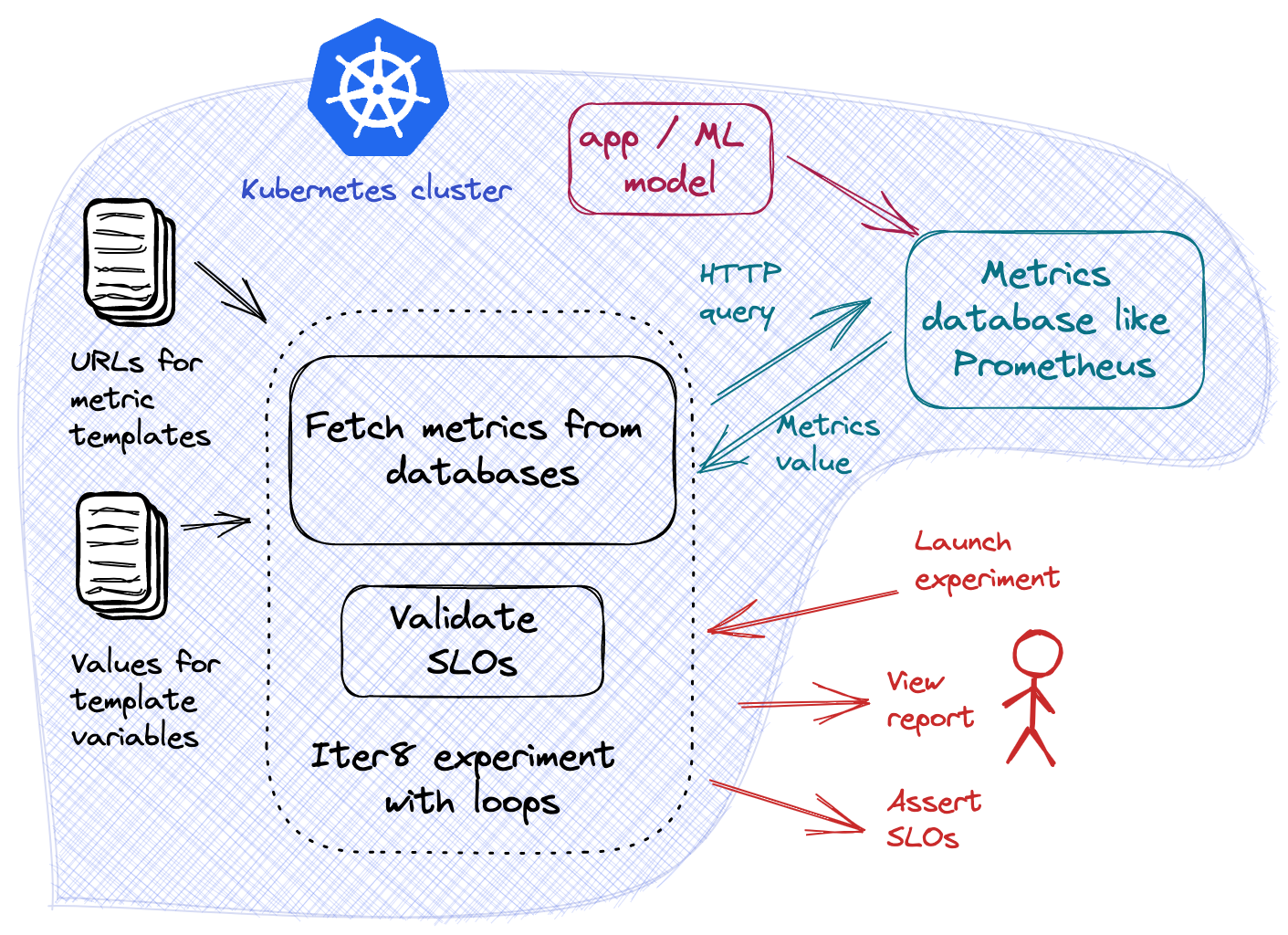

Validate SLOs for an app by fetching the app's metrics from a database (like Prometheus). This is a multi-loop Kubernetes experiment.

Before you begin

- Try your first experiment. Understand the main concepts behind Iter8 experiments.

- Install Istio.

- Install Istio's Prometheus add-on.

- Enable automatic Istio sidecar injection for the default namespace. This ensures that the pods created in the next two steps will have the Istio sidecar.

    kubectl label namespace default istio-injection=enabled --overwrite

- Deploy the sample HTTP service in the Kubernetes cluster.

    kubectl create deploy httpbin --image=kennethreitz/httpbin --port=80
    kubectl expose deploy httpbin --port=80

- Generate load.

    kubectl run fortio --image=fortio/fortio --command -- fortio load -t 6000s http://httpbin.default/get

Launch experiment

iter8 k launch \
  --set "tasks={custommetrics,assess}" \
  --set custommetrics.templates.istio-prom="https://raw.githubusercontent.com/iter8-tools/hub/main/templates/custommetrics/istio-prom.tpl" \
  --set custommetrics.values.labels.namespace=default \
  --set custommetrics.values.labels.destination_app=httpbin \
  --set assess.SLOs.upper.istio-prom/error-rate=0 \
  --set assess.SLOs.upper.istio-prom/latency-mean=100 \
  --set runner=cronjob \
  --set cronjobSchedule="*/1 * * * *"

About this experiment

This experiment consists of two tasks: custommetrics and assess.

The custommetrics task in this experiment works by downloading a provider template named istio-prom from a URL, substituting the template variables with values, using the resulting provider spec to query Prometheus for metrics, and processing the response from Prometheus to extract the metric values. Metrics defined by this template include error-rate and latency-mean; the Prometheus labels used by this template are stored in labels; all the metrics and variables associated with this template are documented as part of the template.
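The substitution step described above can be sketched in shell. This is only an illustration under stated assumptions: the query template, the LABELS placeholder, and the function names build_selector and render_query are hypothetical, and the actual istio-prom template may use a different syntax and different queries.

```shell
# Hypothetical query template (an assumption, not the real istio-prom template).
# LABELS is a placeholder that gets replaced by a PromQL label selector.
template='sum(rate(istio_requests_total{LABELS}[1m]))'

# Turn "key=value" arguments into a PromQL selector: key="value",key="value"
build_selector() {
  selector=""
  for pair in "$@"; do
    key=${pair%%=*}
    value=${pair#*=}
    selector="${selector:+$selector,}$key=\"$value\""
  done
  printf '%s' "$selector"
}

# Substitute the LABELS placeholder in a template with the selector
# built from the remaining "key=value" arguments.
render_query() {
  tpl=$1
  shift
  sel=$(build_selector "$@")
  printf '%s\n' "$tpl" | sed "s|LABELS|$sel|"
}

# Labels taken from the launch command above.
render_query "$template" namespace=default destination_app=httpbin
```

A query rendered this way is the kind of expression that could then be sent to Prometheus, with the metric value extracted from the response.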

The assess task verifies if the app satisfies the specified SLOs: i) there are no errors, and ii) the mean latency of the app does not exceed 100 msec.
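As a rough sketch of what checking upper-limit SLOs involves, the following shell snippet compares metric values against their limits. It is not Iter8's implementation: the function names within_upper_limit and check_slos are hypothetical, and the metric values are made up for illustration. Only the limits (0 and 100) come from the launch command.

```shell
# Succeed if value <= upper limit; awk is used so decimals compare correctly.
within_upper_limit() {
  awk -v v="$1" -v u="$2" 'BEGIN { exit !(v + 0 <= u + 0) }' < /dev/null
}

# Read "metric value limit" triples from standard input and report each one.
# Returns non-zero if any SLO is violated.
check_slos() {
  status=0
  while read -r metric value limit; do
    if within_upper_limit "$value" "$limit"; then
      echo "$metric: satisfied ($value <= $limit)"
    else
      echo "$metric: violated ($value > $limit)"
      status=1
    fi
  done
  return "$status"
}

# Hypothetical metric values; the limits match the SLOs in the launch command.
check_slos <<'EOF'
istio-prom/error-rate 0 0
istio-prom/latency-mean 42.5 100
EOF
```

Note that an upper limit of 0 on the error rate is satisfied only when the measured error rate is exactly 0.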

This is a multi-loop Kubernetes experiment, so its runner value is set to cronjob. The cronjobSchedule expression specifies that each experiment loop (i.e., the sequence of tasks in the experiment) is scheduled to run once every minute. This enables Iter8 to refresh the metric values and perform SLO validation using the latest metric values during each loop.
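To see how the first (minute) field of a cronjobSchedule expression determines loop frequency, here is a small shell sketch that counts how many times per hour a given minute field would fire. It handles only a simplified subset of cron syntax ("*", "*/N", and a single number), and the function names minute_matches and runs_per_hour are hypothetical.

```shell
# Succeed if the given minute (0-59) matches a cron minute field.
# Only "*", "*/N", and a single number are handled in this sketch.
minute_matches() {
  field=$1
  minute=$2
  case $field in
    '*')   return 0 ;;
    '*/'*) step=${field#'*/'}
           [ $((minute % step)) -eq 0 ] ;;
    *)     [ "$field" -eq "$minute" ] ;;
  esac
}

# Count how many experiment loops per hour a minute field would trigger.
runs_per_hour() {
  count=0
  m=0
  while [ "$m" -lt 60 ]; do
    if minute_matches "$1" "$m"; then
      count=$((count + 1))
    fi
    m=$((m + 1))
  done
  echo "$count"
}

runs_per_hour '*/1'   # minute field used in this experiment; prints 60
runs_per_hour '*/5'   # prints 12
```

With the schedule used in this experiment, a loop runs every minute; a minute field of */5 would instead refresh metrics and re-check SLOs every five minutes.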

Assert experiment outcomes

Assert that the experiment encountered no failures, and all SLOs are satisfied.

iter8 k assert -c nofailure -c slos

Sample output from assert

INFO[2021-11-10 09:33:12] experiment has no failure

INFO[2021-11-10 09:33:12] SLOs are satisfied

INFO[2021-11-10 09:33:12] all conditions were satisfied

View the experiment report and logs, and clean up as described in your first experiment.

Some variations and extensions of this experiment

- Perform SLO validation for multiple versions of an app using custom metrics.

- Define and use your own provider templates. This enables you to use any app-specific metrics from any database as part of Iter8 experiments. Read the documentation for the custommetrics task to learn more.

- Alter the cronjobSchedule expression so that experiment loops are repeated at a frequency of your choice. Use https://crontab.guru to learn more about cronjobSchedule expressions.